High-Frequency Settlement Engine

C / Go / PostgreSQL / Docker / Protobufs

Execution Architecture

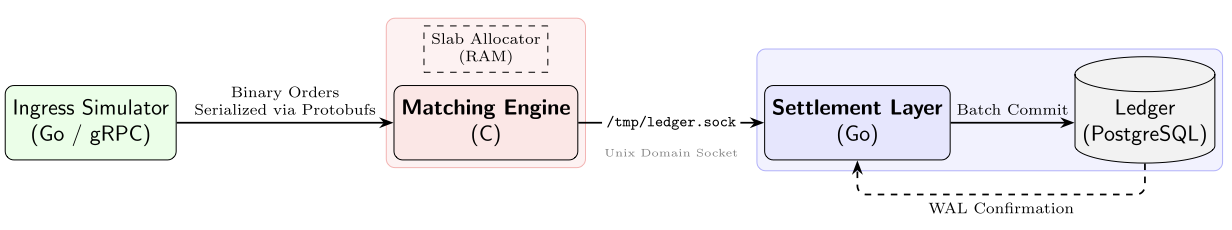

This project investigates the engineering tradeoffs involved in building a low-latency trading system that also guarantees financial correctness. We implemented a high-throughput matching engine in C and a settlement gateway in Go, connected via Unix Domain Sockets with Protobuf framing. Key techniques include a custom slab allocator for deterministic allocation, which reduces both latency and jitter, and a group-commit protocol that batches database writes without sacrificing ACID guarantees. Batching is necessary when writing to disk at high TPS.

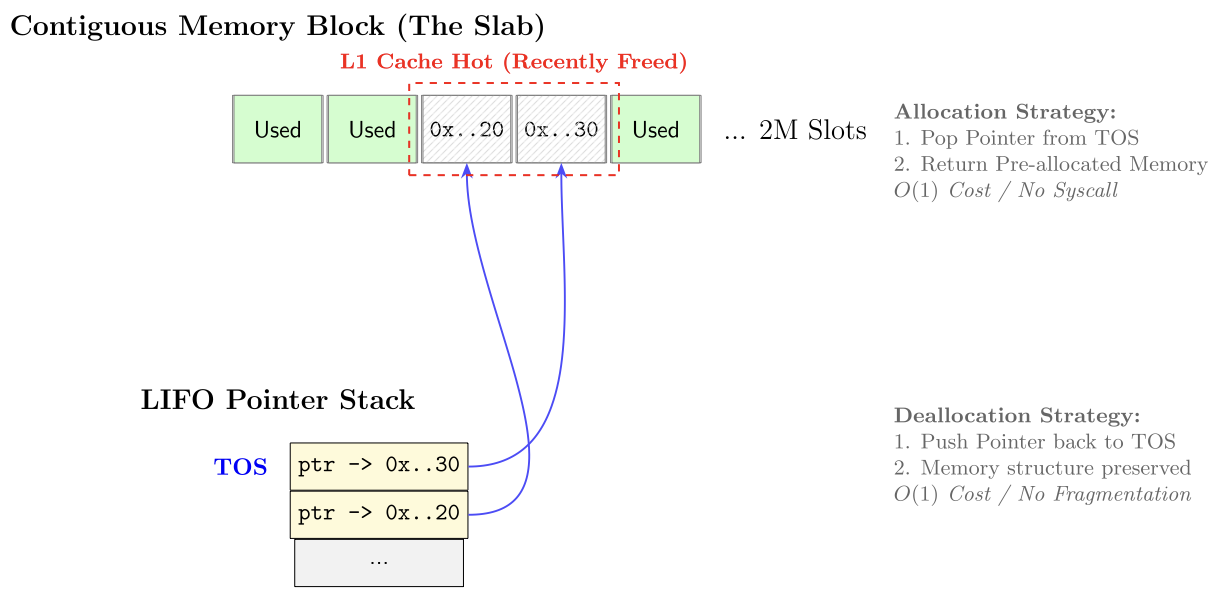

The system architecture decouples low-latency matching from high-integrity settlement. The execution core is implemented in C to maintain deterministic sub-millisecond response times. To eliminate the jitter that malloc() introduces through system calls and heap fragmentation, we implemented a custom slab allocator. At startup, the engine requests a contiguous 128 MB memory block and divides it into slots tracked by a LIFO stack of free pointers. Incoming orders are assigned to pre-warmed memory slots, which gives O(1) allocation and deallocation. Because the most recently freed slot is reused first, the allocator also improves CPU L1 cache locality during the matching loop.
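The allocator described above can be sketched roughly as follows. This is a minimal illustration, not the engine's actual code: the `order_t` layout and the `slab_*` function names are hypothetical, and the arena is obtained with a single `malloc()` at startup for simplicity (the real engine may reserve its block differently).

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical order record; the engine's real layout is not shown here. */
typedef struct {
    uint64_t order_id;
    uint64_t price;     /* fixed-point integer price */
    uint32_t quantity;
    uint32_t side;      /* 0 = buy, 1 = sell */
} order_t;

typedef struct {
    order_t  *slots;      /* contiguous arena, reserved once at startup */
    order_t **free_stack; /* LIFO stack of pointers to free slots */
    size_t    capacity;   /* total number of slots */
    size_t    top;        /* number of free slots currently on the stack */
} slab_t;

/* Reserve the arena and push every slot onto the free stack. Returns 0 on success. */
int slab_init(slab_t *s, size_t arena_bytes) {
    s->capacity = arena_bytes / sizeof(order_t);
    s->top = 0;
    s->slots = NULL;
    s->free_stack = NULL;
    if (s->capacity == 0) return -1;

    s->slots = malloc(s->capacity * sizeof(order_t));
    s->free_stack = malloc(s->capacity * sizeof(order_t *));
    if (s->slots == NULL || s->free_stack == NULL) {
        free(s->slots);
        free(s->free_stack);
        s->slots = NULL;
        s->free_stack = NULL;
        return -1;
    }

    /* Touch every page up front so the hot path never takes a first-use page fault. */
    memset(s->slots, 0, s->capacity * sizeof(order_t));

    /* Push slots in reverse so the lowest address is popped first. */
    for (size_t i = 0; i < s->capacity; i++)
        s->free_stack[i] = &s->slots[s->capacity - 1 - i];
    s->top = s->capacity;
    return 0;
}

/* O(1): pop a free slot, or return NULL when the slab is exhausted. */
order_t *slab_alloc(slab_t *s) {
    if (s->top == 0) return NULL;
    return s->free_stack[--s->top];
}

/* O(1): push the slot back; it becomes the next one handed out (LIFO). */
void slab_free(slab_t *s, order_t *o) {
    assert(s->top < s->capacity); /* guards against freeing more than was allocated */
    s->free_stack[s->top++] = o;
}

void slab_destroy(slab_t *s) {
    free(s->slots);
    free(s->free_stack);
    s->slots = NULL;
    s->free_stack = NULL;
    s->capacity = 0;
    s->top = 0;
}
```

With this sketch, a 128 MB arena would be set up once with `slab_init(&s, 128u * 1024 * 1024)`. After that, the matching loop calls only `slab_alloc` and `slab_free`, neither of which enters the kernel or walks a heap.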

Persistence & ACID Compliance

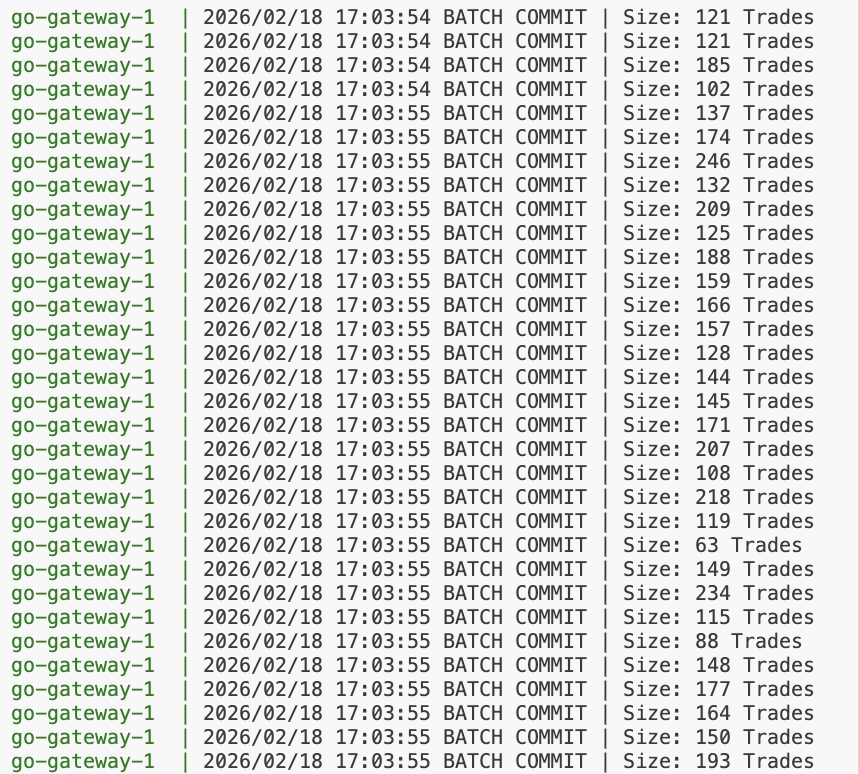

The settlement gateway, written in Go, bridges the execution engine to a PostgreSQL ledger. We enforce financial integrity through strict ACID transactions. Balance updates use row-level locking (SELECT ... FOR UPDATE) to isolate concurrent trades involving the same user ID, serializing conflicting state changes at the database level. To mitigate the write overhead of the Postgres Write-Ahead Log (WAL), which must be flushed to disk on every commit, during high-throughput bursts (10k+ TPS), we implemented a micro-batching mechanism.

Batches are written to Postgres using the COPY protocol. Batch size varies because the ingress engine randomizes its order flow, so not every order is matched immediately. Batching reduces disk I/O operations by more than three orders of magnitude while maintaining durability guarantees.
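The batching idea can be illustrated with a small buffer that hands a whole group of trades to a single flush callback. This is only a sketch of the technique. The actual gateway is written in Go and its batching code is not shown in this document; C is used here for consistency with the other examples. The names `trade_t`, `batcher_*`, `counting_flush`, and the `BATCH_MAX` threshold are all hypothetical. In a real gateway the callback would open one transaction, stream the rows with COPY, and commit once.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define BATCH_MAX 256 /* hypothetical threshold; the real batch size is not given */

/* Hypothetical settled-trade record. */
typedef struct {
    uint64_t buyer_id;
    uint64_t seller_id;
    uint64_t price;    /* fixed-point integer price */
    uint32_t quantity;
} trade_t;

/* Persists a whole batch in one transaction; returns 0 on success. */
typedef int (*flush_fn)(const trade_t *trades, size_t n, void *ctx);

typedef struct {
    trade_t  buf[BATCH_MAX];
    size_t   len;
    flush_fn flush;
    void    *ctx;
} batcher_t;

void batcher_init(batcher_t *b, flush_fn flush, void *ctx) {
    b->len = 0;
    b->flush = flush;
    b->ctx = ctx;
}

/* Flush whatever is buffered. On failure the trades stay buffered for a retry. */
int batcher_flush(batcher_t *b) {
    if (b->len == 0) return 0;
    int rc = b->flush(b->buf, b->len, b->ctx);
    if (rc == 0) b->len = 0;
    return rc;
}

/* Returns 0 if the trade was buffered, -1 if the buffer is full and could not be flushed. */
int batcher_add(batcher_t *b, const trade_t *t) {
    if (b->len == BATCH_MAX && batcher_flush(b) != 0)
        return -1; /* back-pressure: the caller must retry later */
    b->buf[b->len++] = *t;
    return 0;
}

/* Stand-in sink for illustration: counts calls and rows instead of writing to a database. */
typedef struct {
    size_t calls;
    size_t rows;
} sink_stats_t;

int counting_flush(const trade_t *trades, size_t n, void *ctx) {
    (void)trades;
    sink_stats_t *st = ctx;
    st->calls++;
    st->rows += n;
    return 0;
}
```

In this sketch a flush happens when the buffer is full and another trade arrives, or when the caller invokes `batcher_flush` explicitly, for example on a timer so that a partially filled batch is not held indefinitely. The I/O saving comes from paying the commit cost once per batch rather than once per trade.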

Data serialization is handled via Protocol Buffers. The schema is restricted to uint64 and uint32 fields so that all monetary values are carried as fixed-point integers, avoiding the rounding errors of floating-point arithmetic, which are unacceptable in financial software. The entire stack is orchestrated with Docker Compose, using named volumes to work around the virtiofs limitation on Unix Domain Sockets on macOS.
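To make the fixed-point convention concrete, here is a small sketch of integer-only money arithmetic. The scale factor of four decimal places (`PRICE_SCALE`) and the function names are assumptions chosen for illustration; the project's actual scale is not stated in this document.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <stdio.h>

#define PRICE_SCALE 10000u /* hypothetical: prices carry 4 decimal places */

/* Compute price * quantity in scaled units. Returns false if the product would overflow. */
bool notional(uint64_t price_scaled, uint32_t quantity, uint64_t *out) {
    if (quantity != 0 && price_scaled > UINT64_MAX / quantity)
        return false;
    *out = price_scaled * quantity;
    return true;
}

/* Render a scaled value as a decimal string; returns the snprintf result. */
int format_scaled(uint64_t v, char *buf, size_t n) {
    return snprintf(buf, n, "%llu.%04llu",
                    (unsigned long long)(v / PRICE_SCALE),
                    (unsigned long long)(v % PRICE_SCALE));
}
```

Under these assumptions, a price of 101.2500 is stored as the integer 1012500, and multiplying by a quantity of 3 gives exactly 3037500, which formats as "303.7500". No rounding occurs at any step, and overflow is reported rather than silently wrapped.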